Changing PolicyKit settings per user

I have been hit twice by an authentication prompt on my workstation after the Wifi connection got lost, and it is clearly irritating, especially when you are not around. The authentication requests are handled by PolicyKit (polkit for short) and can be tweaked. The message I was hit with was the following: "System policy prevents modification of network settings for all users."

Before you get started: the system-wide configuration files that contain the default values reside inside the /usr/share/polkit-1/actions/ directory. In this directory, the file org.freedesktop.NetworkManager.policy contains all the default actions. It also contains the message about the network settings, for which the action id is "org.freedesktop.NetworkManager.settings.modify.system". At this point I was still clueless about what I was supposed to do.

After searching the web for information about PolicyKit, I found one interesting article that helped me solve my issue and learn more about this authorization framework. Since this is something one very seldom has to do, I'm summing up everything here.

There are two useful commands to perform tests with PolicyKit, pkcheck and pkaction.

The first interesting command to use is pkcheck. It triggers an authorization request and prompts you to type in a password if one is needed; it simply returns true if no authorization is required, otherwise false. For example:

pkcheck --action-id org.freedesktop.NetworkManager.settings.modify.system \
    --process `pidof gnome-session` -u `id -u`

You have to adapt the process and user parameters of course.

Next, the command pkaction can be used to print the default system values, for example:

pkaction --action-id org.freedesktop.NetworkManager.settings.modify.system \
    --verbose

Now, to have a custom setting for your user, you have to create a PolicyKit Local Authority file inside the directory /var/lib/polkit-1/localauthority/. Here is an example:

[Let user mike modify system settings for network]
Identity=unix-user:mike
Action=org.freedesktop.NetworkManager.settings.modify.system
ResultAny=no
ResultInactive=no
ResultActive=yes

I have saved this file under /var/lib/polkit-1/localauthority/50-local.d/10-network-manager.pkla.

There are three main values you can pass to ResultActive: no, auth_admin, or yes. Respectively, they deny the authorization, ask for a password, and grant access. For further information about the possible values, check the polkit manpage; also don't miss the pklocalauthority manpage to read more about the localauthority tree structure.
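If you need the same kind of entry for several users or actions, generating the file content can save some typing. The following is only a sketch: render_pkla is a hypothetical helper of mine, not something shipped with polkit, and it deliberately accepts only the three result values discussed above.

```python
# Hypothetical helper, not part of polkit: renders one .pkla entry as text.
# Only the three result values discussed in this post are accepted.
ALLOWED_RESULTS = {"no", "auth_admin", "yes"}


def render_pkla(title, user, action,
                result_any="no", result_inactive="no", result_active="yes"):
    """Return the text of a single Local Authority entry for one Unix user."""
    for value in (result_any, result_inactive, result_active):
        if value not in ALLOWED_RESULTS:
            raise ValueError("unsupported result value: %r" % value)
    lines = [
        "[%s]" % title,
        "Identity=unix-user:%s" % user,
        "Action=%s" % action,
        "ResultAny=%s" % result_any,
        "ResultInactive=%s" % result_inactive,
        "ResultActive=%s" % result_active,
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_pkla(
        "Let user mike modify system settings for network",
        "mike",
        "org.freedesktop.NetworkManager.settings.modify.system",
    ), end="")
```

The output still has to be saved by hand (as root) under the localauthority directory; the sketch does not write any file itself.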

Update the GeoIP database

GeoIP is a proprietary technology provided by MaxMind that allows the geolocation of IP addresses. It provides databases as both free and paid solutions, with IP records matching the country and the city. The GeoLite Country database can be downloaded for free and is updated about once a month. The database can be used with the command line tool geoiplookup.

First download and install the latest database and license under your home directory, for example ~/.local/share/GeoIP/. Make sure to decompress the database with gunzip. The directory has to contain these files:

GeoIP.dat
LICENSE.txt

Next, create an alias for the command geoiplookup, for example by putting the following line in your ~/.bashrc script:

alias geoiplookup='geoiplookup -d $HOME/.local/share/GeoIP/'

And done! But why all the hassle? Because your system may not provide the updates on a regular basis. Of course you can set up a scheduled task to download the database right into your home directory.
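For the scheduled task, the decompression step can be scripted. Here is a minimal sketch in Python, assuming the archive has already been downloaded somewhere (the download itself is left out on purpose, and install_database is a name I made up for illustration):

```python
import gzip
import os
import shutil


def install_database(gz_path, target_dir):
    """Decompress gz_path (e.g. GeoIP.dat.gz) into target_dir, return the new path."""
    os.makedirs(target_dir, exist_ok=True)
    name = os.path.basename(gz_path)
    if name.endswith(".gz"):
        name = name[:-3]
    dat_path = os.path.join(target_dir, name)
    tmp_path = dat_path + ".tmp"
    # Decompress to a temporary file first, like gunzip would do by hand
    with gzip.open(gz_path, "rb") as src, open(tmp_path, "wb") as dst:
        shutil.copyfileobj(src, dst)
    # Swap the file into place so a lookup never reads a half-written database
    os.replace(tmp_path, dat_path)
    return dat_path


if __name__ == "__main__":
    import sys
    if len(sys.argv) == 2:
        target = os.path.expanduser("~/.local/share/GeoIP")
        print(install_database(sys.argv[1], target))
```

Writing to a temporary file and renaming it afterwards is a design choice, so that the alias above keeps working even while an update is running.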

SPAM-ips.rb

I'm sharing a small script that scans IPs against Whois and GeoIP databases. It quickly retrieves the geolocation of the IPs and prints statistics, so that you know where the connections are originating from. The Whois information is stored inside text files named whois.xxx.yyy.zzz.bbb. You can download the script here.

Example:

• Usage

$ spam-ips.rb --help

Usage: /home/mike/.local/bin/spam-ips.rb ip|filename [[ip|filename] ...]

• First we retrieve some IPs

$ awk '{print $6}' /var/log/httpd/access.log > /tmp/ip-list.txt

• Now we run the script with the list of IPs inside the text file

$ cd /tmp

$ spam-ips.rb ip-list.txt

Scanning 18 IPs... done.

xxx.zzz.yyy.bbb GeoIP Country Edition: IP Address not found

xxx.zzz.yyy.bbb GeoIP Country Edition: BR, Brazil

xxx.zzz.yyy.bbb GeoIP Country Edition: AR, Argentina

xxx.zzz.yyy.bbb GeoIP Country Edition: SE, Sweden

xxx.zzz.yyy.bbb GeoIP Country Edition: CA, Canada

xxx.zzz.yyy.bbb GeoIP Country Edition: US, United States

xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany

xxx.zzz.yyy.bbb GeoIP Country Edition: BE, Belgium

xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France

xxx.zzz.yyy.bbb GeoIP Country Edition: NL, Netherlands

xxx.zzz.yyy.bbb GeoIP Country Edition: NO, Norway

xxx.zzz.yyy.bbb GeoIP Country Edition: FI, Finland

xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany

xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France

xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France

xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany

xxx.zzz.yyy.bbb GeoIP Country Edition: RU, Russian Federation

xxx.zzz.yyy.bbb GeoIP Country Edition: RU, Russian Federation

3 FR, France

3 DE, Germany

2 RU, Russian Federation

1 US, United States

1 NL, Netherlands

1 IP Address not found

1 NO, Norway

1 FI, Finland

1 SE, Sweden

1 CA, Canada

1 BR, Brazil

1 BE, Belgium

1 AR, Argentina

Total: 18

I wrote this script when I noticed Wiki SPAM and concluded that the SPAM originated from a single bot master, but of course I was unable to figure out which one. The script can still be useful from time to time.
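The statistics part at the end of the output boils down to counting identical lookup results. The script itself is written in Ruby; the following Python sketch only illustrates the idea, assuming input lines shaped like the geoiplookup output shown above (country_stats is a hypothetical name):

```python
from collections import Counter

MARKER = "GeoIP Country Edition: "


def country_stats(lines):
    """Count lookup results from lines like 'GeoIP Country Edition: FR, France'."""
    counts = Counter()
    for line in lines:
        _, found, result = line.partition(MARKER)
        if found:  # skip lines that are not lookup results
            counts[result.strip()] += 1
    return counts


if __name__ == "__main__":
    sample = [
        "xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France",
        "xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany",
        "xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France",
        "xxx.zzz.yyy.bbb GeoIP Country Edition: IP Address not found",
    ]
    stats = country_stats(sample)
    for result, count in stats.most_common():
        print(count, result)
    print("Total:", sum(stats.values()))
```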

XTerm as root-tail

The idea behind this title is to use XTerm as a log viewer over the desktop, just like root-tail works. The tool root-tail paints text on the root window by default, or on any other X window when used with the -id parameter. Using XTerm comes with a small advantage: it is possible to scroll into the "backlog" and make text selections. On the downside, it won't let you click through to the desktop, so it is mostly useful for people without desktop icons.

We will proceed with a first simple example, by writing a Shell script that uses the combo DevilsPie and XTerm. The terminals will all be kept in the background below other windows and never take the focus, thanks to DevilsPie. DevilsPie is a tool that watches the creation of new windows and applies special rules to them.

Obviously, you need to install the command line tool devilspie. It's a command to run in the background as a daemon. Configuration files with a .ds extension contain matches for windows and rules, and are put within the ~/.devilspie directory.

First example

The first example shows how to match only one specific XTerm window.

The DevilsPie configuration:

- DesktopLog.ds

(if
  (is (window_class) "DesktopLog")
  (begin
    (wintype "dock")
    (geometry "+20+45")
    (below)
    (undecorate)
    (skip_pager)
    (opacity 80)
  )
)

The Shell script makes sure devilspie is running and spawns a single xterm process:

- desktop-log.sh

#!/bin/sh

test `pidof devilspie` || devilspie &

xterm -geometry 164x73 -uc -class DesktopLog -T daemon.log -e sudo tail -f /var/log/daemon.log &

To try the example, save the DevilsPie snippet inside the directory ~/.devilspie, and download and execute the Shell script. Make sure to quit any previous DevilsPie process whenever you modify or install a new .ds file.

Second example

The second example is a little more complete: it starts three terminals, one of which is coloured in black.

- DesktopLog.ds

(if
  (matches (window_class) "DesktopLog[0-9]+")
  (begin
    (wintype "dock")
    (below)
    (undecorate)
    (skip_pager)
    (opacity 80)
  )
)

(if
  (is (window_class) "DesktopLog1")
  (geometry "+480+20")
)

(if
  (is (window_class) "DesktopLog2")
  (geometry "+20+20")
)

(if
  (is (window_class) "DesktopLog3")
  (geometry "+20+330")
)

- desktop-log.sh

#!/bin/sh

test `pidof devilspie` || devilspie &

xterm -geometry 88x40 -uc -class DesktopLog1 -T daemon.log -e sudo -s tail -f /var/log/daemon.log &

xterm -geometry 70x20 -uc -class DesktopLog2 -T auth.log -e sudo -s tail -f /var/log/auth.log &

xterm -fg grey -bg black -geometry 70x16 -uc -class DesktopLog3 -T pacman.log -e sudo -s tail -f /var/log/pacman.log &

NB: You will probably notice that setting the geometry is awkward, especially since position and size live in two different files; getting it right takes several rounds of tweaking.
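One way to ease the geometry tweaking would be to keep position and size in a single table and generate both the DevilsPie rules and the xterm command lines from it. This is only a sketch of that idea: the TERMINALS table and the two function names are hypothetical, and the generated text simply mirrors the snippets shown above.

```python
# Hypothetical layout table: position (x, y) and size (cols, rows) side by side.
TERMINALS = [
    {"class": "DesktopLog1", "x": 480, "y": 20, "cols": 88, "rows": 40,
     "log": "/var/log/daemon.log"},
    {"class": "DesktopLog2", "x": 20, "y": 20, "cols": 70, "rows": 20,
     "log": "/var/log/auth.log"},
]


def devilspie_rule(term):
    """Return the .ds rule that positions the window of one terminal."""
    return ('(if\n'
            '  (is (window_class) "%s")\n'
            '  (geometry "+%d+%d")\n'
            ')\n' % (term["class"], term["x"], term["y"]))


def xterm_command(term):
    """Return the Shell line that spawns one terminal tailing its log file."""
    title = term["log"].rsplit("/", 1)[-1]
    return "xterm -geometry %dx%d -uc -class %s -T %s -e sudo tail -f %s &" % (
        term["cols"], term["rows"], term["class"], title, term["log"])


if __name__ == "__main__":
    for term in TERMINALS:
        print(devilspie_rule(term))
    for term in TERMINALS:
        print(xterm_command(term))
```

The printed rules would go into a .ds file and the printed commands into the Shell script, so a change of position or size only has to be made in one place.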

This blog post was cross-posted to the Xfce Wiki.

CLI tool to review PO files

If there is one annoying thing about reviewing PO files, it is that it is impossible. When there are two hundred messages in a PO file, how are you going to know which messages changed? Well, that's the way it currently works for Transifex, but there is very good news: first, a review board is already available, which is a good step forward, and second, it is going to get a good kick to make it awesome. Until this happens, I have written two scripts to make such a review.

A shell script msgdiff.sh

Pros: tools available on every system

Cons: ugly output, needs template file

#!/bin/sh

PO_ORIG=$1

PO_REVIEW=$2

PO_TEMPL=$3

MSGMERGE=msgmerge

DIFF=diff

PAGER=more

RM=/bin/rm

MKTEMP=mktemp

# Usage

if test "$1" = "" -o "$2" = "" -o "$3" = ""; then
    echo Usage: $0 orig.po review.po template.pot
    exit 1
fi

# Merge

TMP_ORIG=`$MKTEMP po-orig.XXX`

TMP_REVIEW=`$MKTEMP po-review.XXX`

$MSGMERGE $PO_ORIG $PO_TEMPL > $TMP_ORIG 2> /dev/null

$MSGMERGE $PO_REVIEW $PO_TEMPL > $TMP_REVIEW 2> /dev/null

# Diff

$DIFF -u $TMP_ORIG $TMP_REVIEW | $PAGER

# Clean up files

$RM $TMP_ORIG $TMP_REVIEW

Example:

$ ./msgdiff.sh fr.po fr.review.po thunar.pot

[...]

#: ../thunar-vcs-plugin/tvp-git-action.c:265

-#, fuzzy

msgid "Menu|Bisect"

-msgstr "Différences détaillées"

+msgstr "Menu|Couper en deux"

#: ../thunar-vcs-plugin/tvp-git-action.c:265

msgid "Bisect"

-msgstr ""

+msgstr "Couper en deux"

[...]

A Python script podiff.py

Pros: programmable output

Cons: external dependency

The script depends on polib, which can be installed with the setuptools scripts. Make sure setuptools is installed and then run the command sudo easy_install polib.

#!/usr/bin/env python
import polib

def podiff(path_po_orig, path_po_review):
    """Return a POFile with the reviewed entries that differ from the original."""
    po_orig = polib.pofile(path_po_orig)
    po_review = polib.pofile(path_po_review)
    po_diff = polib.POFile()
    po_diff.header = "PO Diff Header"
    for entry in po_review:
        if entry.obsolete:
            continue
        orig_entry = po_orig.find(entry.msgid)
        # A message missing from the original file counts as changed
        if orig_entry is None \
                or orig_entry.msgstr != entry.msgstr \
                or ("fuzzy" in orig_entry.flags) != ("fuzzy" in entry.flags):
            po_diff.append(entry)
    return po_diff

if __name__ == "__main__":
    import sys
    import os.path

    # Usage
    if len(sys.argv) != 3 \
            or not os.path.isfile(sys.argv[1]) \
            or not os.path.isfile(sys.argv[2]):
        print("Usage: %s orig.po review.po" % sys.argv[0])
        sys.exit(1)

    # Retrieve diff
    path_po_orig = sys.argv[1]
    path_po_review = sys.argv[2]
    po_diff = podiff(path_po_orig, path_po_review)

    # Print out orig v. review messages
    po = polib.pofile(path_po_orig)
    for entry in po_diff:
        orig_entry = po.find(entry.msgid)
        orig_msgstr = orig_entry.msgstr if orig_entry is not None else ""
        orig_fuzzy = review_fuzzy = "fuzzy"
        if orig_entry is None or "fuzzy" not in orig_entry.flags:
            orig_fuzzy = "not fuzzy"
        if "fuzzy" not in entry.flags:
            review_fuzzy = "not fuzzy"
        print("'%s' was %s is %s\n\tOriginal => '%s'\n\tReviewed => '%s'\n" % (entry.msgid, orig_fuzzy, review_fuzzy, orig_msgstr, entry.msgstr))

Example:

$ ./podiff.py fr.po fr.review.po

'Menu|Bisect' was fuzzy is not fuzzy

Original => 'Différences détaillées'

Reviewed => 'Menu|Couper en deux'

'Bisect' was not fuzzy is not fuzzy

Original => ''

Reviewed => 'Couper en deux'

[...]
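The rule used by podiff.py to decide whether a message changed can be shown without polib at all. The sketch below works on plain dictionaries mapping a msgid to a (msgstr, fuzzy) pair; this data layout and the name changed_messages are assumptions made for the illustration, not how polib stores entries.

```python
def changed_messages(orig, review):
    """Return the msgids whose translation or fuzzy flag differs in the review.

    Both arguments map msgid -> (msgstr, is_fuzzy).
    """
    changed = []
    for msgid, reviewed in review.items():
        # A msgid missing from the original also counts as changed
        if orig.get(msgid) != reviewed:
            changed.append(msgid)
    return changed


if __name__ == "__main__":
    orig = {
        "Menu|Bisect": ("Différences détaillées", True),
        "Bisect": ("", False),
    }
    review = {
        "Menu|Bisect": ("Menu|Couper en deux", False),
        "Bisect": ("Couper en deux", False),
    }
    for msgid in changed_messages(orig, review):
        print(msgid)
```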

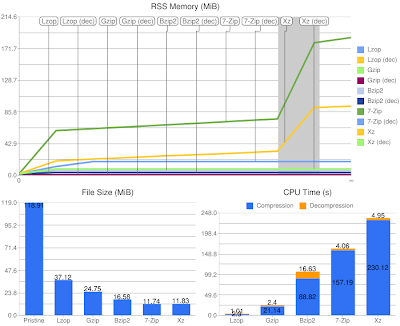

Benchmarking Compression Tools

Comparison of several compression tools: lzop, gzip, bzip2, 7zip, and xz.

- Lzop: small and very fast yet good compression.

- Gzip: fast and good compression.

- Bzip2: slow for both compression and decompression although very good compression.

- 7-Zip: LZMA algorithm, slower than Bzip2 for compression but very good compression.

- Xz: LZMA2, evolution of LZMA algorithm.

Preparation

- Be skeptical about compression tools and want to promote one compression tool

- Compare quickly old and new compression tools and find interesting results

So much for the spirit. What you really need is to write a script (Bash, Ruby, Perl, anything will do), because you will want to generate the benchmark data automatically. I picked Ruby as it is nowadays my language of choice when it comes to any kind of Shell-like script. By choosing Ruby I have a large panel of classes to process benchmarking data: for instance I have a Benchmark class (wonderful), I have a CSV class (awfully documented, redundant), and I have a zillion Gems for any kind of task I would need to do (although I always avoid them).

I first focused on retrieving the data I was interested in (memory, CPU time and file size) and saving it in the CSV format. By doing so I am able to produce charts easily with existing applications. I was thinking it might be possible to use GoogleCL to generate charts from the command line with Google Docs, but it isn't supported (maybe it will be, maybe it won't, it's up to gdata-python-client). However, there is an actual Google tool to generate charts: the Google Chart API, which works by providing a URI to get an image. The Google Image Chart Editor website helps you generate the chart you want in a friendly WYSIWYG mode; after that it is just a matter of computing the data into shape for the URI. While focusing on the charts, I found the Ruby Gem googlecharts, which makes it friendly to pass the data and save the image.
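To give an idea of the data collection step, here is a much reduced sketch in Python rather than Ruby. It is not the script used for this post: it only relies on the gzip, bz2 and lzma modules of the standard library (so no lzop and no 7-Zip), it measures CPU time and output size but not memory, and the names benchmark and to_csv are made up.

```python
import bz2
import csv
import gzip
import io
import lzma
import time

# Standard library stand-ins for the command line tools
CODECS = {
    "gzip": gzip.compress,
    "bzip2": bz2.compress,
    "xz": lzma.compress,
}


def benchmark(data):
    """Compress data with each codec, return one row of measurements per codec."""
    rows = []
    for name, compress in CODECS.items():
        start = time.process_time()
        packed = compress(data)
        elapsed = time.process_time() - start
        rows.append({"tool": name,
                     "cpu_seconds": round(elapsed, 4),
                     "bytes": len(packed)})
    return rows


def to_csv(rows):
    """Serialize the measurement rows as CSV text."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["tool", "cpu_seconds", "bytes"])
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()


if __name__ == "__main__":
    sample = b"some fairly repetitive text to compress\n" * 50000
    print(to_csv(benchmark(sample)), end="")
```

The CSV text can then be loaded into any spreadsheet or charting tool.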

Ruby Script

The Ruby script needs the following:

- It was written with Ruby 1.9

- Linux/Procfs for reading the status of processes

- Googlecharts: gem install googlecharts

- ImageMagick for the command line tool convert (optional)

The script is a bit too long to be pasted here (more or less 300 lines), so you can download it from my workstation. If the link doesn't work, make sure the web browser doesn't encode ~ (e.g. to "%257E"); I've seen this happening with Safari (inside my logs)! If you are really out of luck, it is available on Pastebin.

Benchmarks

The benchmarks are available for three kinds of data: compressed media files, raw media files (image and sound, remember that the compression is lossless), and text files from an open source project.

Media Files

Does it make sense at all to compress already compressed data? Obviously not! Let's take a look at what happens anyway.

As you see, compression tools with a focus on speed don't fail: they still do the job quickly while gaining a few hundred kilobytes. However, the other tools simply waste a lot of time for no gain at all.

So always make sure to use a backup application without compression over media files or the CPU will be heating up for nothing.
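The effect is easy to reproduce on a small scale. In the sketch below, random bytes stand in for already compressed media (an assumption: well compressed data looks close to random to a general-purpose compressor), and repetitive text stands in for compressible data.

```python
import gzip
import os


def ratio(data):
    """Return compressed size divided by original size (lower is better)."""
    return len(gzip.compress(data)) / len(data)


if __name__ == "__main__":
    text = b"The quick brown fox jumps over the lazy dog.\n" * 2000
    noise = os.urandom(len(text))  # stand-in for an already compressed file
    print("text:  %.3f" % ratio(text))
    print("noise: %.3f" % ratio(noise))
```

The ratio for the random bytes stays at about 1, meaning nothing was gained, while the text shrinks to a small fraction of its size.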

Raw Media Files

Will it make sense to compress raw data? Not really. Here are the results:

There is some gain in the order of megabytes now, but the process is still the same, and for that reason a general-purpose compression tool is simply ill-suited. For media files there are existing formats that compress the data losslessly with a higher ratio and a lot faster.

Let's compare lossless compression of a sound file. The initial WAV source file has a size of 44MB and lasts 4m20s. Compressing this file with xz takes about 90s, which is very long, and only reduces the size to 36MB. Now if you choose the FLAC format, which does lossless compression for audio, you get a record: the file is compressed in about 5s to a size of 24MB! The good thing about FLAC is that media players will decode it with hardly any CPU cost.

The same happens with images, but I lack knowledge about photo formats so your mileage may vary. Anyway, apart from the Windows bitmap format, I can't say that you will find uncompressed images, just like you won't find uncompressed videos... TIFF or RAW is the format provided by many reflex cameras; it has lossless compression capabilities and contains a lot of information about image colors and so on. This makes it the perfect format for photographers, as the photo itself doesn't contain any modifications. You can also choose the PNG format, but only for simple images.

Text Files

We get to the point where we can compare interesting results. Here we are compressing the kind of data that is most commonly distributed over the Internet.

Lzop and Gzip perform fast and have a good ratio. Bzip2 has a better ratio, and both the LZMA and LZMA2 algorithms do even better. We can use an initial archive of 10MB, 100MB, or 400MB; the charts will always look like the one above. When choosing a compression format it will be either good compression or speed, but it will definitely never be both: you must choose between these two constraints.

Conclusion

I had never heard about the LZO format until I wanted to write this blog post. It looks like a good choice for end devices where CPU cost is crucial. The compression will always be extremely fast, even for gigabytes of data, with a fairly good ratio. While Gzip is the most widely distributed compression format, it works just like Lzop, focusing by default on speed with good compression. But it can't beat Lzop in speed: even when compressing at level 1 it is slower by a matter of seconds, although it still beats Lzop in final size. When compressing with Lzop at level 9, the speed gets ridiculously slow and the final size doesn't beat Gzip at its default level, where Gzip does the job faster anyway.

Bzip2 sits between LZMA and Gzip. It is widely distributed as a default format nowadays because it beats Gzip in terms of compression ratio. It is of course slower for compression, but what really stands out is the decompression time: it is the worst amongst all tools in all cases.

Both LZMA and LZMA2 behave almost identically. Unlike the other formats, they use dynamic memory allocation: the larger the input data, the more memory is allocated. We can see that the evolution of LZMA uses less memory but, on the other hand, has a higher cost in CPU time. We can also see that they have excellent decompression times, although Lzop and Gzip have the best scores; then again, there can't be both an excellent compression ratio and an excellent compression time. The difference between the compression ratios of the two formats is in the order of hundreds of kilobytes; after all, it is an evolution and not a revolution.

On a last note, I ran the benchmarks on an Intel Atom N270, which exposes two logical cores (one physical core with Hyper-Threading) at 1.6GHz, but I made sure to run the compression tools on only one of them.

A few interesting links:

- RAWpository: a collection of RAW images

- LZMA vs Bzip2 at TheGeekStuff from 2010-06-04

- Benchmarks by Stéphane Lesimple with different levels from 2010-03-09

- Benchmarks by Advogato also with different levels from 2009-09-25