SPAM-ips.rb

I'm sharing a small script that scans IPs against Whois and GeoIP databases. It quickly retrieves the geolocation of each IP and prints statistics, so that you know where the connections originate from. The Whois information is stored in text files named whois.xxx.yyy.zzz.bbb. You can download the script here.

Example:

• Usage

$ spam-ips.rb --help

Usage: /home/mike/.local/bin/spam-ips.rb ip|filename [[ip|filename] ...]

• First we retrieve some IPs

$ awk '{print $6}' /var/log/httpd/access.log > /tmp/ip-list.txt

• Now we run the script with the list of IPs inside the text file

$ cd /tmp

$ spam-ips.rb ip-list.txt

Scanning 18 IPs... done.

xxx.zzz.yyy.bbb GeoIP Country Edition: IP Address not found

xxx.zzz.yyy.bbb GeoIP Country Edition: BR, Brazil

xxx.zzz.yyy.bbb GeoIP Country Edition: AR, Argentina

xxx.zzz.yyy.bbb GeoIP Country Edition: SE, Sweden

xxx.zzz.yyy.bbb GeoIP Country Edition: CA, Canada

xxx.zzz.yyy.bbb GeoIP Country Edition: US, United States

xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany

xxx.zzz.yyy.bbb GeoIP Country Edition: BE, Belgium

xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France

xxx.zzz.yyy.bbb GeoIP Country Edition: NL, Netherlands

xxx.zzz.yyy.bbb GeoIP Country Edition: NO, Norway

xxx.zzz.yyy.bbb GeoIP Country Edition: FI, Finland

xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany

xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France

xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France

xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany

xxx.zzz.yyy.bbb GeoIP Country Edition: RU, Russian Federation

xxx.zzz.yyy.bbb GeoIP Country Edition: RU, Russian Federation

3 FR, France

3 DE, Germany

2 RU, Russian Federation

1 US, United States

1 NL, Netherlands

1 IP Address not found

1 NO, Norway

1 FI, Finland

1 SE, Sweden

1 CA, Canada

1 BR, Brazil

1 BE, Belgium

1 AR, Argentina

Total: 18

I wrote this script when I noticed wiki spam and concluded that the spam originated from a single bot master, but of course I was unable to figure out which one. The script can still be useful from time to time.
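The statistics step can be sketched in a few lines of Ruby. This is not the script's actual code; it is a minimal sketch that assumes the lookup lines have the "GeoIP Country Edition: ..." shape shown in the example output, and the helper name `tally_countries` is made up for illustration.

```ruby
# Hypothetical helper (not taken from the script): count how often each
# country appears in lines such as
# "xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France".
def tally_countries(lines)
  counts = Hash.new(0)
  lines.each do |line|
    # Keep only what follows the "GeoIP Country Edition: " label.
    country = line.strip[/GeoIP Country Edition: (.+)$/, 1]
    counts[country] += 1 if country
  end
  # Most frequent countries first.
  counts.sort_by { |_, n| -n }
end

sample = [
  "xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France",
  "xxx.zzz.yyy.bbb GeoIP Country Edition: DE, Germany",
  "xxx.zzz.yyy.bbb GeoIP Country Edition: FR, France"
]
tally_countries(sample).each { |country, n| puts "#{n} #{country}" }
```

Lines that don't carry the label are simply ignored, so the "Scanning ..." banner can be fed to the helper along with the rest of the output.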

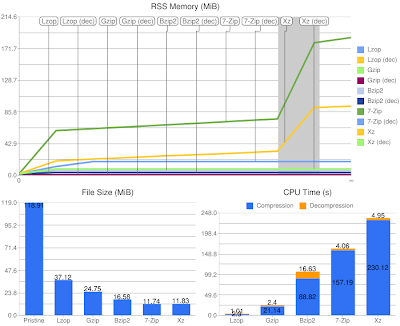

Benchmarking Compression Tools

Comparison of several compression tools: lzop, gzip, bzip2, 7zip, and xz.

- Lzop: small and very fast yet good compression.

- Gzip: fast and good compression.

- Bzip2: slow for both compression and decompression although very good compression.

- 7-Zip: LZMA algorithm, slower than Bzip2 for compression but very good compression.

- Xz: LZMA2, evolution of LZMA algorithm.

Preparation

- Be skeptical about compression tools before promoting any one of them

- Quickly compare old and new compression tools and find interesting results

I first focused on retrieving the data I was interested in (memory, CPU time and file size) and saving it in CSV format. That way I can easily produce charts with existing applications. I thought it might be possible to use GoogleCL to generate charts from the command line with Google Docs, but this isn't supported (maybe it will be, maybe it won't; it's up to gdata-python-client). However, there is an actual Google tool for generating charts: the Google Chart API, which returns an image for a given URI. The Google Image Chart Editor website helps you design the chart you want in a friendly WYSIWYG mode; after that it is just a matter of getting the data into shape for the URI. While looking into the charts I also found the Ruby gem googlecharts, which makes it easy to pass the data and save the image.
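To give an idea of the measuring part, here is a minimal sketch of how the CPU time of an external command can be collected and turned into a CSV row. It is not an excerpt from the benchmark script: the helper names `child_cpu_time` and `csv_row` are hypothetical, and the demonstration command is just the Ruby interpreter itself so that the example runs anywhere.

```ruby
require "rbconfig"

# Hypothetical helper: run a command and return the CPU time (user +
# system, in seconds) consumed by the child, as reported by Process.times.
def child_cpu_time(*command)
  before = Process.times
  system(*command)
  after = Process.times
  (after.cutime - before.cutime) + (after.cstime - before.cstime)
end

# Hypothetical helper: format one measurement as a CSV row.
# The fields are plain numbers and names, so no quoting is needed.
def csv_row(tool, cpu_seconds, size_bytes)
  [tool, format("%.2f", cpu_seconds), size_bytes].join(",")
end

# Demonstration with a trivial command; a real run would call a
# compression tool and pass File.size of the resulting archive.
cpu = child_cpu_time(RbConfig.ruby, "-e", "100_000.times { |i| i * i }")
puts "tool,cpu_seconds,size_bytes"
puts csv_row("ruby-loop", cpu, 0)
```

`Process.times` only accounts for children that have been waited for, which `system` does before it returns.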

Ruby Script

The Ruby script needs the following:

- Ruby 1.9 (the version it was written with)

- Linux/Procfs for reading the status of processes

- Googlecharts: gem install googlecharts

- ImageMagick for the command line tool convert (optional)
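As an illustration of the procfs requirement, the sketch below parses the contents of /proc/<pid>/status into a hash. Which fields the benchmark script actually reads is not stated here, so the use of VmHWM (peak resident set size) and the helper names are assumptions.

```ruby
# Hypothetical helper: parse the "Key:<tab>value" lines of a
# /proc/<pid>/status dump into a hash of strings.
def parse_proc_status(text)
  text.each_line.with_object({}) do |line, fields|
    key, value = line.chomp.split(/:\s*/, 2)
    fields[key] = value if value
  end
end

# Peak resident set size in kB (the VmHWM field), or nil if it is missing.
def peak_rss_kb(status)
  status["VmHWM"] && status["VmHWM"].to_i
end

# On Linux the text would come from File.read("/proc/#{pid}/status");
# a literal sample keeps the example runnable on any system.
sample = "Name:\tgzip\nVmPeak:\t   20000 kB\nVmHWM:\t    1500 kB\n"
status = parse_proc_status(sample)
puts "#{status['Name']}: peak RSS #{peak_rss_kb(status)} kB"
```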

The script is a bit too long to paste here (around 300 lines), so you can download it from my workstation. If the link doesn't work, make sure the web browser doesn't encode ~ (e.g. to "%257E"); I've seen this happen with Safari (in my logs)! If you are really out of luck, it is also available on Pastebin.

Benchmarks

The benchmarks cover three kinds of data: compressed media files, raw media files (image and sound; remember that the compression is lossless), and text files from an open source project.

Media Files

Does it make sense at all to compress already compressed data? Obviously not! Let's take a look at what happens anyway.

As you can see, the compression tools that focus on speed don't fail: they still do the job quickly while gaining a few hundred kilobytes. The other tools, however, simply waste a lot of time for no gain at all.

So always make sure that your backup application doesn't compress media files, or the CPU will be heating up for nothing.

Raw Media Files

Does it make sense to compress raw data? Not really. Here are the results:

There is now some gain, in the order of megabytes, but the process is still the same, and for that reason these tools are simply ill-suited. For media files there are dedicated formats that compress the data losslessly with a higher ratio and a lot faster.

Let's compare lossless compression of a sound file. The initial WAV source file has a size of 44MB and lasts 4m20s. Compressing this file with xz takes about 90s, which is very long considering it only reduces the size to 36MB. Now if you choose FLAC, a format designed for lossless audio compression, the difference is striking: the file is compressed in about 5s to a size of 24MB! The good thing about FLAC is that media players decode it with very little CPU cost.
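Put into numbers, the sizes quoted above translate into the following savings. This is only the arithmetic on the figures from the paragraph (44MB source, 36MB with xz, 24MB with FLAC); the helper name is made up.

```ruby
# Percentage of the original size that the compression saved.
def savings_percent(original_mb, compressed_mb)
  ((original_mb - compressed_mb) * 100.0 / original_mb).round(1)
end

puts "xz:   #{savings_percent(44, 36)}% saved"  # prints "xz:   18.2% saved"
puts "FLAC: #{savings_percent(44, 24)}% saved"  # prints "FLAC: 45.5% saved"
```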

The same happens with images, but I lack knowledge about photo formats so your mileage may vary. Anyway, apart from the Windows bitmap format, you will rarely find uncompressed images, just like you won't find uncompressed videos. TIFF or RAW is the format provided by many reflex cameras; it has lossless compression capabilities and contains a lot of information about image colors and so on, which makes it the perfect format for photographers as the photo itself doesn't contain any modifications. You can also choose the PNG format, but only for simple images.

Text Files

We get to the point where we can compare interesting results. Here we are compressing the kind of data that is most commonly distributed over the Internet.

Lzop and Gzip perform fast and have a good ratio. Bzip2 has a better ratio, and both the LZMA and LZMA2 algorithms do even better. Whether the initial archive is 10MB, 100MB, or 400MB, the charts always look like the one above. When choosing a compression format it will be either good compression or speed, but never both; you must choose between these two constraints.
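The trade-off between speed and ratio can be observed even within a single format. The sketch below is not part of the benchmark: it swaps the command line tools for Ruby's bundled zlib binding (the algorithm behind gzip) and compares the fastest and the strongest levels on some repetitive sample text. The helper name and the sample data are assumptions for illustration.

```ruby
require "zlib"

# Compress the same data at several zlib levels and report the resulting
# size and the elapsed wall-clock time for each level.
def compare_levels(data, levels = [Zlib::BEST_SPEED, Zlib::BEST_COMPRESSION])
  levels.map do |level|
    started = Process.clock_gettime(Process::CLOCK_MONOTONIC)
    compressed = Zlib::Deflate.deflate(data, level)
    elapsed = Process.clock_gettime(Process::CLOCK_MONOTONIC) - started
    { level: level, bytes: compressed.bytesize, seconds: elapsed }
  end
end

sample = "The quick brown fox jumps over the lazy dog.\n" * 20_000
compare_levels(sample).each do |r|
  puts format("level %d: %d bytes in %.4fs", r[:level], r[:bytes], r[:seconds])
end
```

The exact numbers depend on the machine and on the input, so none are shown here; real text archives are far less repetitive than this sample.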

Conclusion

I had never heard of the LZO format until I set out to write this blog post. It looks like a good choice for end devices where CPU cost is crucial. Compression is always extremely fast, even for gigabytes of data, with a fairly good ratio. Gzip, the most widely distributed compression format, works just like Lzop in that it focuses by default on speed with good compression. But it can't beat Lzop in speed: even at level 1 it is slower by a matter of seconds, although it still beats Lzop on final size. With Lzop at level 9, compression gets ridiculously slow and the final size still doesn't beat Gzip at its default level, where Gzip does the job faster anyway.

Bzip2 falls somewhere between LZMA and Gzip. It is widely used as a default format nowadays because it beats Gzip in terms of compression ratio. It is of course slower at compression, but what really stands out is the decompression time: it is the worst of all tools in all cases.

LZMA and LZMA2 behave almost identically. Unlike the other formats, they use dynamic memory allocation: the larger the input data, the more memory is allocated. We can see that LZMA2, the evolution of LZMA, uses less memory but on the other hand costs more CPU time. We can also see that both have excellent decompression times, although Lzop and Gzip have the best scores; then again, you can't have both an excellent compression ratio and an excellent compression time. The difference in compression ratio between the two formats is in the order of hundreds of kilobytes; after all, it is an evolution and not a revolution.

On a last note, I ran the benchmarks on an Intel Atom N270 at 1.6GHz, which exposes two logical cores (a single physical core with Hyper-Threading), but I made sure to run the compression tools on only one of them.

A few interesting links:

- RAWpository: a collection of RAW images

- LZMA vs Bzip2 at TheGeekStuff from 2010-06-04

- Benchmarks by Stéphane Lesimple with different levels from 2010-03-09

- Benchmarks by Advogato also with different levels from 2009-09-25

Eatmonkey 0.1.3 benchmarking

Eatmonkey has now been released for the 4th time, and I started using it to download videos from FOSDEM2010 by dragging and dropping the links from the web page onto the manager :-)

I downloaded four files and, while they were running, I had a close look at top and iftop to monitor the CPU usage and the bandwidth usage between client and server (the connection between eatmonkey and the aria2 XML-RPC server running on the localhost interface).

The results were unexpected, and I was surprised by the CPU usage. It is currently very high, which means I have a new task for the next milestone: getting the CPU footprint down. The bandwidth holds no surprises, but since the milestone will target performance wherever possible, I will cut down the number of requests made to the server. The problem is also noticeable in the GUI, which tends to micro-freeze during the updates of each download. So the more active downloads are running, the more the client freezes.

Some results, as they speak louder than words:

| Number of active downloads | Reception | Emission | CPU% |
|---|---|---|---|
| 4 downloads | 144Kbps | 18Kbps | 30% |
| 3 downloads | 108Kbps | 14Kbps | 26% |
| 2 downloads | 73Kbps | 11Kbps | 18% |

I will start by running benchmarks on the code itself, and thankfully Ruby has built-in support for benchmarking and profiling. It comes with at least three useful modules: benchmark, profile and profiler. The first measures the time a piece of code takes to execute. It is useful for comparing different kinds of loops such as for, while or do...while, or for example to see whether a string is best matched through a plain compare function or via a compiled regular expression. The second simply needs to be required at the top of a Ruby script and it will print a summary of the time spent in each method/function call. The third does the same, except that the profiler can be run around specific blocks of code. So much for the presentation; below are some samples.

File benchmark.rb:

    #!/usr/bin/ruby -w
    require "benchmark"
    require "pp"

    # Time a single block
    integers = (1..10000).to_a
    pp Benchmark.measure { integers.map { |i| i * i } }

    # Compare several blocks; 10 is the width of the label column
    Benchmark.bm(10) do |b|
      b.report("simple") { 50000.times { 1 + 2 } }
      b.report("complex") { 50000.times { 1 + 2 - 6 + 5 * 4 / 2 + 4 } }
      b.report("stupid") { 50000.times { "1".to_i + "3".to_i * "4".to_i - "2".to_i } }
    end

    # Substring search versus regular expression match
    words = IO.readlines("/usr/share/dict/words")
    Benchmark.bm(10) do |b|
      b.report("include") { words.each { |w| next if w.include?("abe") } }
      b.report("regexp") { words.each { |w| next if w =~ /abe/ } }
    end

File profile.rb:

    #!/usr/bin/ruby -w
    require "profile"

    def factorial(n)
      n > 1 ? n * factorial(n - 1) : 1
    end

    factorial(627)

File profiler.rb:

    #!/usr/bin/ruby -w
    require "profiler"

    def factorial(n)
      (2..n).to_a.inject(1) { |product, i| product * i }
    end

    # Profile only the code between start_profile and stop_profile
    Profiler__.start_profile
    factorial(627)
    Profiler__.stop_profile
    Profiler__.print_profile($stdout)

Update: The profiling showed that during a status request 65% of the time is consumed by the XML parser. REXML is written 100% in Ruby, which strongly hints that the same request done with a parser written in C could give a real boost. On the other hand, the requests are now run only once per period and cached inside the pooler. This means that the emission bitrate stays constant while the reception bitrate grows as more downloads are running. As a side effect, less XML parsing is done and thus less CPU time is used.
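The caching mentioned in the update can be pictured roughly as follows. This is not eatmonkey's code: the class name `StatusCache`, the interval and the fake request are all assumptions, and the sketch only shows the general idea of running an expensive request at most once per period.

```ruby
# Hypothetical cache: the block stands in for the expensive request
# (an XML-RPC status call in eatmonkey's case) and is run at most once
# per interval; callers in between get the cached value.
class StatusCache
  def initialize(interval, &fetch)
    @interval = interval
    @fetch = fetch
    @fetched_at = nil
    @value = nil
  end

  # "now" can be passed explicitly, which makes the cache easy to test.
  def get(now = Process.clock_gettime(Process::CLOCK_MONOTONIC))
    if @fetched_at.nil? || now - @fetched_at >= @interval
      @value = @fetch.call
      @fetched_at = now
    end
    @value
  end
end

requests = 0
cache = StatusCache.new(1.0) { requests += 1; "status ##{requests}" }
cache.get(0.0)  # first call runs the request
cache.get(0.5)  # within the interval, served from the cache
cache.get(1.2)  # interval elapsed, the request runs again
puts requests   # prints 2
```

With such a cache the number of requests no longer depends on how many downloads are displayed, only on the refresh period.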

Backward compatibility for Ruby 1.8

As I'm currently writing some Ruby code that I started with version 1.9, I ran into cases where some methods don't exist in Ruby 1.8. This is very annoying, and I started by switching the code to 1.8 method calls. I disliked this when it came to Process.spawn, which is a one-line call to execute a separate process; rewriting it takes around 5 lines instead.

So I had the idea to reuse something I had already seen once. I wrote a new file named compat18.rb and include it in the sources that need it. Ruby makes it very easy to add new methods to existing classes/modules, even if they already exist, so I just did that and it works like a charm.

Here is a small snippet:

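    # compat18.rb: add methods missing from Ruby 1.8
    class Array
      # With a value argument, find_index behaves like index
      def find_index(obj)
        index(obj)
      end
    end

    class Dir
      # Dir.exists? is a class method, so define it on the class itself
      def self.exists?(path)
        File.directory?(path)
      end
    end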
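A more defensive variant of the same idea only adds a method when the running interpreter lacks it, so that native implementations are never overwritten. This is a sketch of that approach under my own assumptions, not the content of compat18.rb.

```ruby
# Sketch of a guarded compatibility shim: each method is only defined
# when the running Ruby does not already provide it.
class Array
  unless method_defined?(:find_index)
    def find_index(obj)
      index(obj)
    end
  end
end

class Dir
  # Inside the class body, respond_to? asks the Dir class itself,
  # i.e. it checks for the class method Dir.exists?.
  unless respond_to?(:exists?)
    def self.exists?(path)
      File.directory?(path)
    end
  end
end

puts [10, 20, 30].find_index(20)  # prints 1
puts Dir.exists?(Dir.pwd)         # prints true
```

The guard for Dir is also relevant on recent interpreters, since Dir.exists? was removed again in Ruby 3.2 in favor of Dir.exist?.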

Update: It can happen that a method from Ruby 1.8 has been dropped entirely and replaced by a new method in 1.9. In this case, check whether the older method exists and call it; otherwise, call the parent implementation.

    class Array
      def count
        if defined? nitems
          return nitems
        else
          return super
        end
      end
    end

The download manager is in the wild

So it's finally done. It took very long, but it's done. The download manager I once had in mind is taking off into the wild :-) Of course it took long because I never did anything with the idea. Writing a front-end to wget/curl isn't interesting (who cares about downloading HTTP/FTP files when the web browser handles it for you anyway?), and reusing GVFS doesn't make sense because you really don't want to download from your trash:// or whatever proto://, and again HTTP/FTP alone is not interesting. Not at all. I have come across Uget and other very good projects, but most of them either write their own code to handle protocols like HTTP and/or plan to handle more interesting protocols like BitTorrent. I think that is a very tough job that demands too much of a one-maintainer project. Recently I saw the new release of aria2, which comes with an XML-RPC interface, and it held all my interest for 4 days. I believe this utility is very promising, and I would really like to write the good, user-friendly XML-RPC GUI client that it seems to be missing!

What is so exciting about aria2? If you know the project you can skip this, but the features of this small utility are worth mentioning. It supports HTTP(S)/FTP but also BitTorrent and Metalinks. It is widely customizable for each specific protocol. It can download one file by splitting it into several pieces and using multiple connections, and it can even mix HTTP URIs with BitTorrent and at the same time upload to BitTorrent peers what has been downloaded through HTTP. So this has to be the perfect candidate for writing a nice download manager, hasn't it?

The client is a very first version that I intended to code-name draft, although the release assistant on xfce.org doesn't allow this. Instead it will take the more neutral road of 0.1.0, 0.1.1, etc. until 0.2.0, followed by stable fix releases.

Why draft? Simple. It's being written in a higher-level language than C, and not even Vala :-) High-level languages are a great deal when starting a new application, as you can type less and get more, instead of typing like a dog for a rocking hot, well, lousy window. Since I like Ruby, it's currently being written in Ruby, and it depends on the ruby-gnome2 project for the bindings. To give you an idea, a main file that opens a window takes 3 lines. Of course the final version is meant to be written in Vala/C, but I still need to convince myself that Vala+libsoup isn't an option that is going to waste too much time. At first glance libsoup looks easy to use: it can build XML-RPC requests, request HTTP bodies and send messages, but it is not an XML-RPC client, and you never know how well the Vala bindings will play. This means extra attention for small things. Starting an application from scratch with such constraints is usually a big time-killer, so using an existing XML-RPC client, as in this case, is very important. The GUI is done with Glade in GtkBuilder format, and reusing it from a new language will be pretty easy.

So what's next? I'll wait for some feedback to see what the audience thinks about it, if anything, and polish here and there. Stay tuned for the next update.

Ruby for Web Development

I recently started a new web dev project, and decided to use it to better learn Ruby. However, I don’t want to use Rails. I’d like to keep it simple. I also prefer to know a lot about the inner workings of a particular technology before I go and use a large framework that hides a bunch of details from me.

However, I want to use ActiveRecord.

So, ActiveRecord. I install it on my laptop with “emerge ruby-activerecord”, and there I go. One “require ‘activerecord’” in my script later, and, awesome, it starts working.

Then I start working on my web host (DreamHost, if you’re wondering). It can’t find ActiveRecord. But it’s obviously installed, because I know DH supports Rails out of the box, and I don’t think you can have an install of Rails without ActiveRecord. So I poke, and then realise it might be installed as a Ruby “gem.” Ok, so I put a “require ‘rubygems’” above my activerecord require. Nice, now it works. Then I think, well, what if I put this somewhere that doesn’t require rubygems? Not hard to work around automatically:

``begin
  require 'activerecord'
rescue LoadError
  require 'rubygems'
  require 'activerecord'
end``

Nice, ok, that works. It’s probably a foolish micro-optimisation, but whatever.

Then I notice… ugh, this is super slow. Even on the web host, it can take a good two seconds for the “require ‘activerecord’” statement to execute. Yeah, I know, ruby is kinda slow. But 2 extra seconds each time someone hits basically any page of the website? Ugh.

So…. FastCGI. I know DH supports it, so I head over to the control panel and enable it for the domain I’m working on, and start googling around to figure out how to use FastCGI in a ruby script.

Unfortunately, there aren’t too many resources on this. Fortunately I found a couple sample dispatch scripts, one of which I ended up basing mine off of.

But then there was a problem. Inside my app, I use ruby’s CGI class to access CGI form variables and other stuff. Since the FastCGI stuff overrides and partially replaces ruby’s internal CGI class, there’s a problem. Doing “cgi = CGI.new” inside a ruby script that’s being served through FastCGI throws a weird exception. But I wanted to try to retain compatibility for non-FastCGI mode. And I couldn’t figure out how to get the ‘cgi’ variable from the ruby dispatch script into my app’s script, since I was using ‘eval’ to run my script. The dispatch script I saw used some weird Binding voodoo. Up at the top level we have:

``def getBinding(cgi, env)
  return binding
end``

I had no idea what that was doing, so I looked it up. Apparently the built-in “binding” function returns a Binding object that describes the current execution context, including local variables and function/method arguments. Ok, that seems really powerful and cool. So I look down to the sample dispatch script’s eval statement, and I see:

``eval File.open(script).read, getBinding(cgi, cgi.env_table)``

Ok, so it appears it tries to eval the script while providing an execution context that contains just ‘cgi’ and one of its member vars. I only sorta understand this. So I ditched the “cgi = CGI.new” line in my app’s script. But I got a NameError when just trying to use ‘cgi’. Huh? What’s going on? So I get rid of the getBinding() call entirely, and just let it use the current execution context, and suddenly everything works right. Weird.
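To make the Binding idea a bit more concrete, here’s a tiny standalone sketch (not the dispatch script itself). The `get_binding` helper just mirrors the shape of getBinding above, and the string and hash I pass in are made-up stand-ins for the real CGI object and its environment table:

```ruby
# Hypothetical helper with the same shape as the dispatch script's getBinding:
# the returned Binding captures this method's arguments as local variables.
def get_binding(cgi, env)
  binding
end

# Stand-in values; a real dispatcher would pass the FastCGI-provided object.
b = get_binding('fake cgi', { 'REQUEST_METHOD' => 'GET' })

# Code eval'd against the binding can see 'cgi' and 'env'...
result = eval("cgi.upcase + ' / ' + env['REQUEST_METHOD']", b)
puts result

# ...but a name the binding doesn't know about raises NameError.
begin
  eval('not_in_scope', b)
  missing_name_raised = false
rescue NameError
  missing_name_raised = true
end
puts missing_name_raised
```

So in principle the eval’d script should only see what the binding hands it, which is at least consistent with how I read the sample script.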

Well, sorta. Now, remember, I want to preserve compatibility with running as a normal CGI. So the normal CGI needs to create its own ‘cgi’ object, but the FastCGI one should just use the one from the dispatch script. So I came up with this:

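```ruby
begin
  # Under FastCGI, the dispatch script has already defined 'cgi'.
  if !cgi.nil?
    mycgi = cgi
  end
rescue NameError
  # No 'cgi' in scope: running as a plain CGI, so create our own.
  require 'cgi'
  mycgi = CGI.new
end
```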

Ok, that seemed to work ok. After that block, ‘mycgi’ should be usable as a CGI/FCGI::CGI object regardless of which mode it’s running under.
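If you want to convince yourself that both paths really get taken, here’s a rough simulation. It’s only a sketch: the symbols stand in for real CGI objects, and the two `binding_*` helpers are hypothetical names I made up to fake the “dispatcher provided cgi” and “nothing provided” situations:

```ruby
# The same begin/rescue shape as above, with a symbol standing in for CGI.new.
app_preamble = <<~RUBY
  begin
    mycgi = cgi unless cgi.nil?
  rescue NameError
    mycgi = :standalone_cgi
  end
  mycgi
RUBY

# Hypothetical: an execution context where the dispatcher supplied 'cgi'.
def binding_with_cgi(cgi)
  binding
end

# Hypothetical: an execution context with no 'cgi' in sight (plain CGI mode).
def binding_without_cgi
  binding
end

under_fastcgi   = eval(app_preamble, binding_with_cgi(:dispatcher_cgi))
under_plain_cgi = eval(app_preamble, binding_without_cgi)

puts under_fastcgi.inspect
puts under_plain_cgi.inspect
```

One thing this makes obvious: if ‘cgi’ exists but happens to be nil, neither branch assigns anything and ‘mycgi’ ends up nil.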

So I play around a bit more, and suddenly notice that my POST requests have stopped working. I dig into it a bit, and realise that my POST requests are actually just fine. What’s happening is that, somehow, the FCGI::CGI object completely ignores $QUERY_STRING on a POST request, while ruby’s normal CGI object will take care of it and merge it with the POST data variables. You see, to make my URLs pretty, I have normal URLs rewritten such that the script sees “page=whatever” in the query string. So when I did a POST, the page= would get lost, and so the POST would end up fetching the root web page rather than the one that should be receiving the form variables. I’m not sure if this is “normal” behavior, or if the version of the fcgi ruby module on DreamHost has a bug. Regardless, we need a workaround. So I go back to my last code snippet, and hack something together:

```ruby
begin
  if !cgi.nil?
    if cgi.env_table['REQUEST_METHOD'] == 'POST'
      # Merge the query-string variables into the POST params by hand.
      CGI.parse(cgi.env_table['QUERY_STRING']).each do |k,v|
        cgi.params[k] = v
      end
    end
    mycgi = cgi
  end
rescue NameError
  require 'cgi'
  mycgi = CGI.new
end
```

Ick. But at least it works.
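One wrinkle with that hack: a query-string key replaces any POST field with the same name. Here’s a sketch of a gentler merge on plain hashes. Note that I’m swapping in `URI.decode_www_form` instead of `CGI.parse` so the example doesn’t depend on the cgi library, and `merge_query_string` is a hypothetical helper name, not something from the fcgi module:

```ruby
require 'uri'

# Hypothetical helper: fold query-string pairs into a params hash shaped like
# the one a CGI object exposes (String keys, Array-of-String values).
# Existing POST values are kept; query-string values are appended after them.
def merge_query_string(params, query_string)
  # to_s guards against a missing (nil) QUERY_STRING.
  URI.decode_www_form(query_string.to_s).each do |key, value|
    (params[key] ||= []) << value
  end
  params
end

post_params = { 'comment' => ['hello'] }
merged = merge_query_string(post_params, 'page=whatever&tag=a&tag=b')
puts merged.inspect
```

Whether appending or overwriting is the right call depends on which side you want to win when both carry the same key; my original snippet lets the query string win.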

So far, I’m liking ruby quite a lot. It’s a beautiful language, and seems well-suited for this kind of work, especially since I want to get something that works up and running relatively quickly.

We’ll see, however, how many more gotchas I run into.
